Inside Waymo's Secret World for Training Self-Driving Cars

A look at how Alphabet understands its most ambitious artificial intelligence project

In a corner of Alphabet’s campus, there is a team working on a piece of software that may be the key to self-driving cars. No journalist has ever seen it in action until now. They call it Carcraft, after the popular game World of Warcraft.

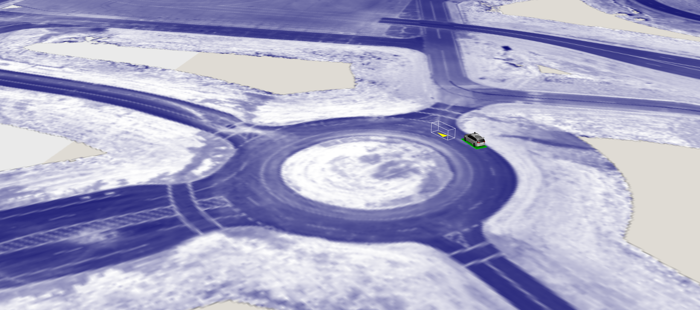

The software’s creator, a shaggy-haired, baby-faced young engineer named James Stout, is sitting next to me in the headphones-on quiet of the open-plan office. On the screen is a virtual representation of a roundabout. To human eyes, it is not much to look at: a simple line drawing rendered onto a road-textured background. We see a self-driving Chrysler Pacifica at medium resolution and a simple wireframe box indicating the presence of another vehicle.

Months ago, a self-driving car team encountered a roundabout like this in Texas. The speed and complexity of the situation flummoxed the car, so the team decided to build a look-alike strip of physical pavement at a test facility. And what I’m looking at is the third step in the learning process: the digitization of real-world driving. Here, a single real-world driving maneuver, like one car cutting off the other on a roundabout, can be amplified into thousands of simulated scenarios that probe the edges of the car’s capabilities.

Scenarios like this form the base for the company’s powerful simulation apparatus. “The vast majority of work done—new feature work—is motivated by stuff seen in simulation,” Stout tells me. This is the tool that’s accelerated the development of autonomous vehicles at Waymo, which Alphabet (née Google) spun out of its “moon-shot” research wing, X, in December of 2016.

If Waymo can deliver fully autonomous vehicles in the next few years, Carcraft should be remembered as a virtual world that had an outsized role in reshaping the actual world on which it is based.