Defense tech enters a new era: the case of Anthropic and the DOD

According to reports from the Wall Street Journal, the Defense Department used Anthropic's Claude AI, via its Palantir contract, to help with the attack on Venezuela and capture former President Nicolás Maduro. Photo illustration by Michael M. Santiago/Getty Images

This dispute is likely to continue reverberating throughout the defense technology and policy worlds.

Defense technology often attracts people who are inclined to pay attention to the various stages of a normal technology’s lifecycle: its technical underpinnings and development; its relationship with existing techniques; the intended applications its development seeks to serve; and the process of testing and validating a system’s performance such that it is suitable for real-world deployment and operation. Artificial Intelligence (AI) is no exception.

Recent events indicate that this lens, by itself, is becoming inadequate for understanding the developmental trajectories and practical impacts of AI in U.S. defense. (Editor’s note: Forecast International, like Nextgov/FCW, is owned by GovExec.)

The dispute between Large Language Model (LLM) vendor Anthropic and the U.S. Department of Defense (DOD) is unprecedented. The capabilities of such models are becoming secondary to a broader trend: the relationship between the DOD and private-sector firms working on the frontier of this dual-use technology is characterized by the former’s perception that the latter’s technology is indispensable for its own ends.

This piece provides a thorough timeline of events, including comments on the reported use of Anthropic's "Claude" model in U.S. kinetic attacks on Iran. It also sets the DOD's new position on critical dual-use technology like AI against the reliability traditionally expected of such technology, arguing that maximum operational access to this technology increasingly takes precedence over these traditional concerns.

What we know so far

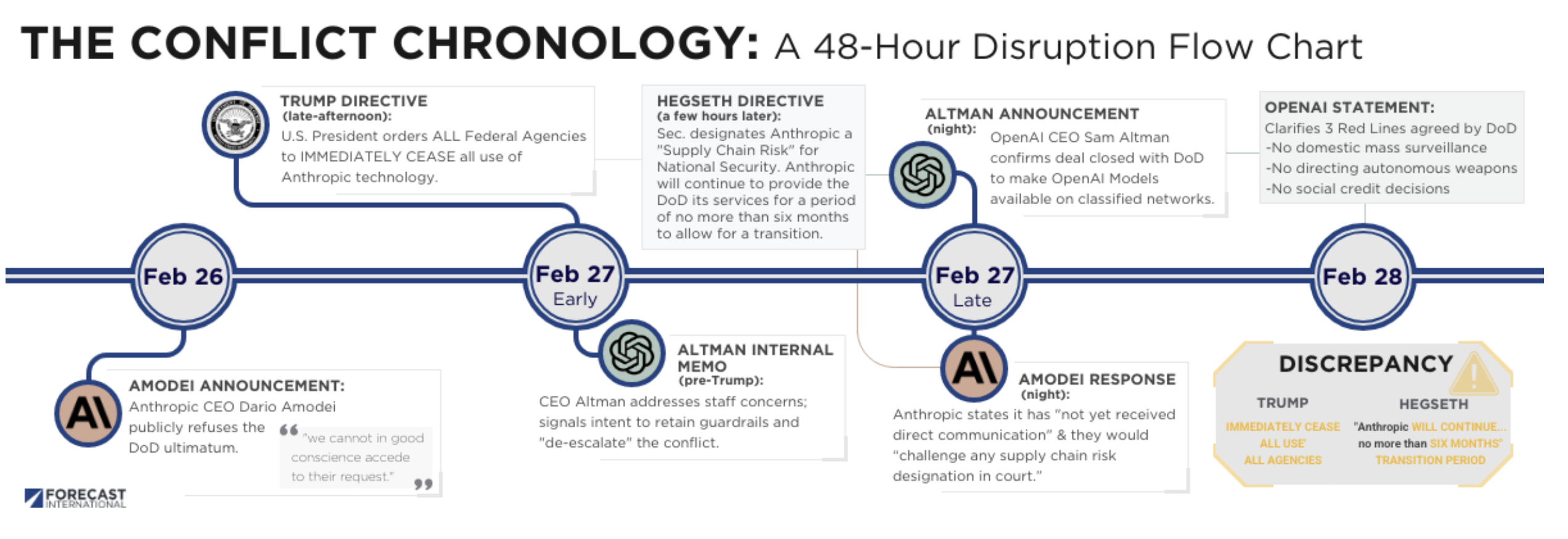

On February 26th, Dario Amodei, CEO of Anthropic, announced the company’s decision in response to the DOD’s ultimatum on the use of the company’s technology: “we cannot in good conscience accede to their request.”

The announcement followed a quick succession of reports indicating that Anthropic and the DOD were at odds over the use of the technology that the DOD had contracted Anthropic, in July 2025, to develop.

The following day, in the late afternoon, U.S. President Donald Trump posted on Truth Social, indicating that Anthropic would escape the most significant consequences but that its relationship with the U.S. government would nevertheless be severed: “Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology. We…will not do business with them again!”

The minimum implication of President Trump’s directive — should his post translate into a binding federal directive — is that Anthropic’s contracts with the DOD and broader U.S. federal government were effectively terminated. Likewise, any uses of the models by federal government employees should have ceased.

However, a couple of hours later on the same day, U.S. Secretary of Defense Pete Hegseth posted on X: “…I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security…Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service.”

Although Secretary Hegseth did not invoke the Defense Production Act, it appears that only one of his two directives can be carried out: either compel other DOD contractors to excise Anthropic’s products from their DOD-related work, or continue the use of Anthropic’s models for some period of time.

Amodei responded that night, noting that Anthropic had “not yet received direct communication” from the DOD on these decisions, and forcefully stated that the company would “challenge any supply chain risk designation in court.”

It is this impending court challenge that will determine the exact course of action taken. Technically, should Secretary Hegseth’s supply chain risk designation hold before the courts make their final decision, the DOD will begin the months-long process of disentangling Anthropic’s models from its workflows and systems. In this scenario, DOD contractors would also be compelled to excise Anthropic’s products from their work for the DOD, though only from that work (rather than from a given contractor’s work wholesale).

This scenario would be costly for Anthropic, as even a temporary designation would disrupt the relationships Anthropic has built with a formidable customer base, particularly in recent months following the release of its Opus 4.5 and Opus 4.6 models.

If Secretary Hegseth’s supply chain risk designation order is stayed by a federal judge as proceedings play out, then the immediate disruption to both DOD and contractor workflows will be delayed. For how long depends on the outcome of the court process. In this scenario, one might expect to see additional jabs at Anthropic made directly or indirectly by the DOD as proceedings unfold. Alternatively, though less likely, an accumulation of pressure from other industry actors might see efforts to reduce the temperature.

The supply chain risk designation and six-month grace period are, nevertheless, seemingly incompatible; it is unclear how a federal court will navigate them.

OpenAI’s DOD maneuver

As this saga was unfolding, employees of OpenAI and Google published an open letter last week, not formally associated with either company, expressing support for the “red lines” that Anthropic established in its relationship with the DOD and urging their companies’ leaders to “refuse the Department of War’s current demands for permission to use our models for domestic mass surveillance and autonomously killing people without human oversight.”

OpenAI CEO Sam Altman internally responded to his staff’s concerns on the 27th, before President Trump’s post, noting his intention to both retain the guardrails Anthropic sought on its own models when used in classified settings and to “de-escalate” the Anthropic-DOD dispute.

OpenAI is, for what it is worth, substantially less motivated by safety concerns than rival Anthropic (a difference rooted in its history). Altman specifically is an (in)famously skilled operator, having regained his position as CEO of OpenAI after a brief termination by the OpenAI Board of Directors in 2023.

Perhaps unsurprisingly, then, Altman posted on X on the night of the 27th that OpenAI had closed a deal with the DOD to make OpenAI’s models available on classified networks. Bizarrely, Altman included the following detail: that the DOD accepted OpenAI’s “red lines,” which Altman suggested other AI firms, like Anthropic, would accept.

Amodei previously confirmed that Anthropic’s red lines were: (1) The use of its AI products for mass domestic surveillance (which is explicitly distinguished from “lawful foreign intelligence and counterintelligence missions”); and (2) The use of its AI products for fully autonomous weapons (which is explicitly distinguished from “partially” autonomous weapons currently in use in Ukraine).

It is unclear why the DOD would accept these red lines in the case of OpenAI but not Anthropic, save for some difference in how they are interpreted.

Some minor clarity is provided by OpenAI’s February 28th statement on its DOD deal, where it lays out three red lines to which the DOD is said to have agreed (below is a direct quote):

- No use of OpenAI technology for mass domestic surveillance.

- No use of OpenAI technology to direct autonomous weapons systems.

- No use of OpenAI technology for high-stakes automated decisions (e.g. systems such as “social credit”).

OpenAI distinguishes these red lines from reliance on a company’s usage policies to guide defense deployments, noting that the agreement specifies deployment of OpenAI’s models is exclusively cloud-based and accompanied by the company’s “full discretion over our safety stack…” An excerpt of the contract is provided in which DOD Directive 3000.09 on autonomy in weapon systems is referenced as a standard for the usage of OpenAI’s models.

Notably included in this statement is a blunt, single-sentence opposition to the designation of Anthropic as a supply chain risk.

Interestingly, on February 25th Anthropic made a change to its Responsible Scaling Policy, which voluntarily governs the company’s efforts to mitigate catastrophic AI risks. The new policy does away with the prior (self-imposed) restrictions on the training of AI models whose risks cannot be fully mitigated in advance, indicating that the company likely believed there was room for compromise with the DOD before OpenAI’s maneuver.

This shift is consistent with reporting that Anthropic was negotiating with the DOD through last week, understanding themselves to be on track for a deal, right until the moment of President Trump's announcement on Friday. (Anthropic had privately rejected a proposal to keep their models on the cloud rather than instantiated in the weapon itself.)

Altman has since suggested OpenAI's deal was "rushed." OpenAI lead researcher Noam Brown posted on X on March 3rd:

"@OpenAI will not be deploying to the NSA or other DoW intelligence agencies for now, so that there's time to address potential surveillance loopholes through the democratic process."

Note that Brown's comments target intelligence agencies, and not lethal autonomous weapon systems (LAWS).

Importantly, there is no indication that OpenAI's contract is terminated, nor is it clear that a substantive adjustment to its language is immediately forthcoming based on this announcement, beyond certain language already slated for an amendment. The timeline for intelligence gathering or analysis using OpenAI's models is therefore an open question.

In any event, OpenAI’s maneuver into the DOD in part serves to further embed OpenAI’s models in classified networks where Anthropic previously held a firm lead.

Reliability: second to operational access?

The past week or so has seen discussions of LAWS with reference to LLMs. It is often unclear what commentators mean by this, as such ideas are, largely, hypothetical.

Still, the past week has shown that traditional DOD concerns with the reliability of mission- and safety-critical defense technology, like LAWS, have been secondary to operational access to that technology.

Among the more striking aspects of Amodei’s February 26th announcement is a frank admission: the technology the company develops — long-term visions notwithstanding — is not reliable enough to safely operate in domains where fundamental civil liberties or life itself are at stake. To be sure, the qualifier “today” accompanies these remarks. But consider Amodei’s remark:

“But today, frontier AI systems are simply not reliable enough to power fully autonomous weapons…fully autonomous weapons cannot be relied upon to exercise the critical judgment that our highly trained, professional troops exhibit every day. They need to be deployed with proper guardrails, which don’t exist today.”

This comment is all the more striking when one realizes Amodei need not have made it: the company is riding a commercial high with a product, Claude Code, of some significant popularity within the DOD and elsewhere. Amodei could have opposed the use of Anthropic’s technology for LAWS on moral grounds without including this particular detail.

Amodei’s comment was echoed by U.S. Air Force Lt. Gen. Jack Shanahan (ret.), who wrote on LinkedIn:

“No LLM, anywhere, in its current form, should be considered for use in a fully lethal autonomous weapon system…Despite the hype, frontier models are not ready for prime time in national security settings. Over-reliance on them at this stage is a recipe for catastrophe.”

These views are relatively in sync with one another, revolving around the suitability of a given class of AI models (transformer-based LLMs) for a given set of tasks (those that are safety- or mission-critical in nature).

LLMs do not provide guarantees on their performance. This is a banal fact about such models. It is routinely band-aided in three ways: through scaffolding, in which the LLM is situated in a structure that may have a separate, domain-specific (and typically limited) model verify its outputs; through prompting techniques that guide the LLM to more appropriate outputs; or through wholesale restrictions on the use of LLMs in tasks where the margin for acceptable error is essentially zero.

The general point has reverberated throughout the DOD and industry. It is why, in August 2025, Palantir Chief Revenue Officer Ryan Taylor bluntly stated: “LLMs, on their own, are at best a jagged intelligence divorced from even basic understanding.” In February of this year, U.S. Air Force Lt. Col. Matthew Jensen suggested that an LLM could conceivably be used as an interface with which human pilots can debrief on the activity of a Collaborative Combat Aircraft’s autonomy suite, implying a separation of critical systems.

Interestingly, although the DOD is traditionally concerned with the reliability of technologies deployed in mission-critical domains, it is primarily Anthropic, and not the DOD, that has expressed this concern in the current context. Rather than indicating that the DOD is unconcerned with such matters, it is more likely that the locus of dispute as perceived by DOD leadership is not the question of whether Anthropic’s technology is sufficiently reliable, but the question of whether the DOD has maximum operational access to it.

Operational access to Claude and the Iran strikes

Consistent with this view, The Wall Street Journal reported on Sunday that the DOD had used Anthropic’s Claude model in support of the U.S.’s attacks on Iran (undertaken in coordination with Israel). The report notes that U.S. Commands globally, including U.S. Central Command, which has the Middle East under its purview, use this tool. For Iran specifically, it states that Central Command used Claude for “intelligence assessments, target identification and simulating battle scenarios…”

To what extent Claude informed these activities and substantively shaped their consequences is unclear, as was the case for similar reporting on Claude’s use in the capture of former Venezuelan President Nicolás Maduro (e.g., there is a range of possible ways in which such a tool could be used for intelligence assessments, varying dramatically in their practical significance).

Note, too, that this report indicates that Anthropic's supply chain risk designation was either not in force at the time of these strikes or was circumvented, a fact of potential relevance should Anthropic proceed with its lawsuit. As of March 2nd, U.S. cabinet agencies had begun ceasing their use of Anthropic's AI products in favor of OpenAI's and Google's. The State Department reportedly cited President Trump's directive as justification.

That said, it is fascinating that Anthropic’s model would be used in target identification. Coupled with the lack of performance guarantees offered by LLMs, and the relatively limited information available, this use bears some resemblance to the Israeli Defense Forces' (IDF) own use of AI in target acquisition.

The IDF has expressed concern over the past decade (at least) about its ability to acquire targets efficiently during wartime, a concern born of the Israeli Air Force’s “shortage” of identifiable and valuable targets in prior conflicts. The General Staff Targeting Directorate was established in 2019 explicitly to link data science and machine learning to this end.

Two AI systems (of largely unspecified but likely simple design), called “Habsora” and “Lavender,” were employed by the IDF after the October 2023 Hamas attack for the acquisition of infrastructure targets and individual targets in Gaza, respectively.

The point is that the use of AI in target acquisition and other activities during extended combat operations is not separable from the goals and standards that govern their execution. Whether Anthropic’s tools were significantly used in this context is only in part a question of the model’s capabilities; the other side of this coin is whether these human ends require the reliability Amodei suggested is unavailable, a determination exclusively made by the human personnel and commanders involved. The technology does not itself set these standards, and could not enforce those that exist.

That said, sharing information that Claude was used directly in the service of kinetic attacks — although not breaching the original red lines set out by the company — might be understood as a kind of psychological warfare against Anthropic itself, as the DOD asserts its access to these models. Researchers at this firm routinely characterize their work developing Claude as one might when cultivating virtues in an impressionable human. A psychological effect on them would be anticipated by those within the DOD when sharing the news with reporters.

Defense tech's future beckons

Secretary Hegseth’s apparent willingness to designate Anthropic, an American defense contractor, a supply chain risk is unprecedented. Whatever the outcome of any impending court challenge, the DOD has effectively indicated a willingness to go to extreme lengths in gaining access, both formal and operational, to state-of-the-art, dual-use defense technology. The stakes and implications of this development are significantly greater than earlier precedents for DOD-Silicon Valley tumult, including Project Maven.

This dispute is likely to continue reverberating throughout the defense technology and policy worlds. Indeed, one finds Dean Ball, a lead drafter of the Trump administration's AI Action Plan, writing with dread in reference to this dispute.

A Frankenstein’s Monster effect, in which the developers of LLMs have found their pronouncements on the world-historical nature of their technologies acceded to, now defines their defense relationships in ways those same developers likely did not anticipate.