What Will Happen When Computer Chips Stop Getting Smaller?

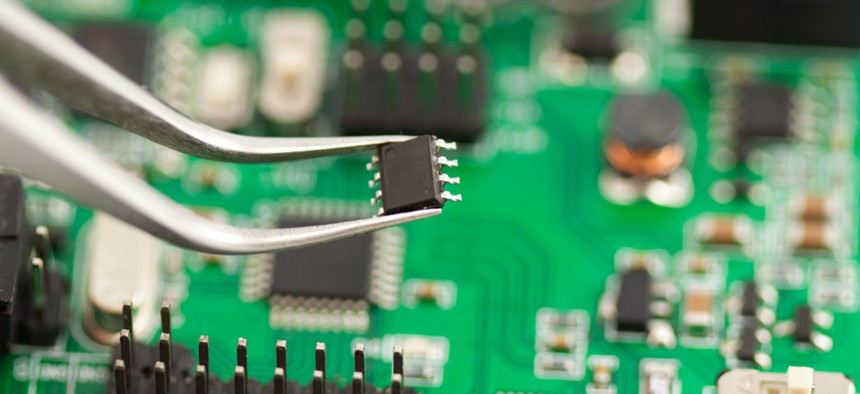

Ekaterina Kondratova/Shutterstock.com

That’s the $3 billion question.

IBM is spending $3 billion to figure out what will happen when computer power stops doubling every few years.

The strange phenomenon -- called Moore’s Law -- was described in 1965 by Intel cofounder Gordon Moore, who predicted the number of components that can fit on an integrated circuit would double every year as the technology evolved.

Ten years later, he had reason to believe the rate would slow to doubling every two years. Somehow, the pattern has roughly held ever since, helping computers go from room size to desk size to lap size to phone size to nano size.
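To get a feel for how much the doubling period matters, here is a minimal sketch of the arithmetic. It assumes idealized, perfectly steady doubling; the function name `projected_count` and the starting count of 1 are illustrative choices, not figures from Moore or IBM.

```python
def projected_count(start_count, years, doubling_period_years):
    """Return the count after `years`, assuming it doubles once
    every `doubling_period_years` (an idealized model)."""
    return start_count * 2 ** (years / doubling_period_years)


# Compare a decade of growth under the two doubling rates.
for period in (1, 2):
    growth = projected_count(1, 10, period)
    print(f"Doubling every {period} year(s): {growth:.0f}x after 10 years")
```

Under these assumptions, a decade of yearly doubling multiplies the count about a thousandfold, while doubling every two years yields a 32-fold increase.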

Although some disagree, another former Intel executive last year predicted Moore’s Law will cease to hold after about 2020, partly on the assumption that things can’t keep getting smaller forever, and they’re already pretty small.

“The current generation of chips, due later this year, have reached 14 nanometers,” according to Arik Hesseldahl of Re/code. “For a sense of scale, that’s only a tad thicker than the wall of an individual cell.”

Chips can get a little smaller, but what happens after that is the $3 billion question. The physical limits of silicon are slowing the shrinking process, so it might be time for a new material. Or maybe new kinds of computing will drive the changes; IBM is looking to quantum and neurosynaptic computing, for instance.

If eternal business growth depends on chips eternally shrinking, the future will be interesting to watch. Hopefully, it’s no big thing.

