The Computer That Predicted the U.S. Would Win the Vietnam War

A cautionary tale about the dangers of big data

At just about the halfway point of Lynn Novick and Ken Burns’s monumental documentary on the Vietnam War, an army advisor tells an anecdote that seems to sum up the relationship between the military and computers during the mid-1960s.

“There’s the old apocryphal story that in 1967, they went to the basement of the Pentagon, when the mainframe computers took up the whole basement, and they put on the old punch cards everything you could quantify. Numbers of ships, numbers of tanks, numbers of helicopters, artillery, machine gun, ammo—everything you could quantify,” says James Willbanks, the chair of military history at U.S. Army Command and General Staff College. “They put it in the hopper and said, ‘When will we win in Vietnam?’ They went away on Friday and the thing ground away all weekend. [They] came back on Monday and there was one card in the output tray. And it said, ‘You won in 1965.’”

This is, first and foremost, a joke. But given the emphasis that Secretary of Defense Robert McNamara placed on data and running the numbers, I began to wonder whether there was actually some software that tried to calculate precisely when the United States would win the war, and whether it could once have given such an answer.

The most prominent citation for the apocryphal story comes in Harry G. Summers’ study of the war, American Strategy in Vietnam: A Critical Analysis. In this telling, however, it is not the Johnson administration doing the calculation but the incoming Nixon officials:

When the Nixon Administration took over in 1969 all the data on North Vietnam and on the United States was fed into a Pentagon computer—population, gross national product, manufacturing capability, number of tanks, ships, and aircraft, size of the armed forces, and the like. The computer was then asked, “When will we win?” It took only a moment to give the answer: “You won in 1964!”

Summers wrote that “the bitter little story” circulated “during the closing days of the Vietnam War.” It made the point that there “was more to war, even limited war, than those things that could be measured, quantified, and computerized.”

There’s no doubt that Vietnam was quantified in new ways. McNamara had brought what a historian called “computer-based quantitative business-analysis techniques” that “offered new and ingenious procedures for the collection, manipulation, and analysis of military data.”

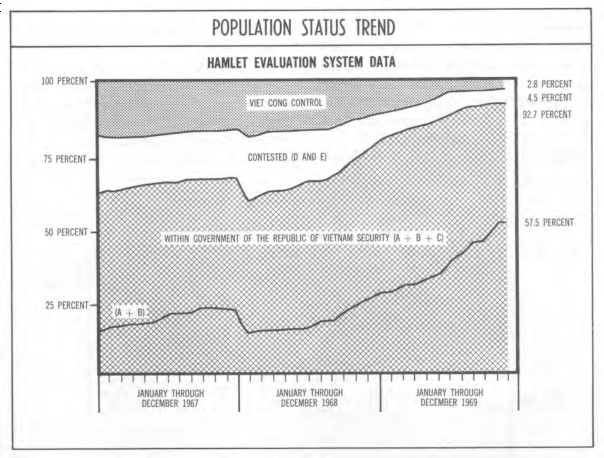

In practice, this meant creating vast amounts of data, which had to be sent to computing centers and entered on punch cards. One massive program was the Hamlet Evaluation System, which sought to quantify how the American program of “pacification” was proceeding by surveying 12,000 villages in the Vietnamese countryside. “Every month, the HES produced approximately 90,000 pages of data and reports,” a RAND report found. “This means that over the course of just four of the years in which the system was fully functional, it produced more than 4.3 million pages of information.”

Once a baseline was established, decision makers could see progress. And they wanted to see progress, which created pressure on data gatherers to paint a rosy portrait of the situation on the ground. The slippage between reality and the model of reality based on data became one of the key themes of the war.

“The crucial factors were always the intentions of Hanoi, the will of the Viet Cong, the state of South Vietnamese politics, and the loyalties of the peasants. Not only were we deeply ignorant of these factors, but because they could never be reduced to charts and calculations, no serious effort was made to explore them,” wrote Richard N. Goodwin, a speechwriter for Presidents Kennedy and Johnson. “No expert on Vietnamese culture sat at the conference table. Intoxicated by charts and computers, the Pentagon and war games, we have been publicly relying on and calculating the incalculable.”

All of which the “apocryphal story” condenses into a biting joke.

But was there actually a computer somewhere in the Pentagon that was cranking out “When will we win the war?” calculations?

On October 27, 1967, The Wall Street Journal ran an un-bylined blurb from its Washington, D.C., bureau on the front page talking about a “victory index.”

U.S. strategists seek a “victory index” to measure progress in the Vietnam War. They want a single statistic reflecting enemy infiltration, casualties, recruiting, hamlets pacified, roads cleared. Top U.S. intelligence officials, in a recent secret huddle, couldn’t work out an index; they get orders to keep trying.

Now, a victory index is not quite a computer program you can ask “When will we win the war?” But it’s pretty close! A chart could be plotted. Projections could be made from current progress to future ultimate success. At the very least, we can say that officials tried to build a system that could be the kernel of truth at the center of a certainly embellished story.

And it doesn’t seem out of the question that the specific error—showing the United States had already won—could have actually occurred. As the intelligence officials tried different models to make sense of all their numbers, it certainly seems possible that some statistical runs would, in fact, return the result that the peak of the victory index had already occurred. That the war had been won.

In a world besotted by data, the apocryphal story about the Pentagon computers reminds us that the model is not the world, and that ignoring that reality can have terrible consequences.