Government delayed measuring rate of oil flow for five weeks

High-tech sensors could have been deployed to accurately estimate the amount of oil escaping into the Gulf of Mexico, but U.S. officials thought the deep-water well would be capped quickly.

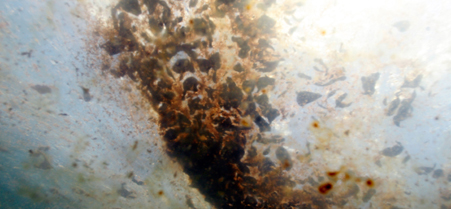

Rich Matthews/AP

Updated at 5:25 p.m., Wednesday, June 23.

The federal government delayed by five weeks deploying high-tech sensors that could accurately measure how much oil was spewing out of the BP well in the Gulf of Mexico because officials thought the well would be capped or shut down within a relatively short time span, according to contract documents the U.S. Coast Guard released on Monday.

The National Oceanic and Atmospheric Administration and BP downplayed the amount of oil gushing into the Gulf, at one time estimating the flow rate was about 5,000 barrels a day after the Deepwater Horizon drill rig sank on April 22. But officials with the Coast Guard Research and Development Center in New London, Conn., decided on May 26 they needed an accurate assessment because BP's estimates "are not consistent with other estimations in the scientific community," they noted in a document titled "Justification for Other Than Full and Open Competition."

Last week, the government estimated between 35,000 and 60,000 barrels a day were escaping into the Gulf.

The Coast Guard center said it awarded in May a sole-source contract worth $191,100 to Woods Hole Oceanographic Institution, which will use advanced sonar systems to measure the oil flow, because "delays in measuring the flow rate and other available characteristics are having a detrimental effect on the estimation on oil released into the Gulf of Mexico."

In its justification for the sole-source contract, the center's officials said, "Delays in measurement of the oil flow are no longer acceptable and require an urgent response."

Lamar McKay, president of BP America, told the House Transportation and Infrastructure Committee on May 19, "This leak is not measurable through technology we know."

On the same day McKay was testifying on the Hill, Richard Camilli, associate scientist for applied ocean physics and engineering at Woods Hole, told the House Subcommittee on Energy and Environment that the oceanographic institute could adapt imaging multibeam sonar, normally used to map the seabed, to monitor the flow rate.

He said he wrote an e-mail to BP officials on May 4 proposing to use the sonar technology and an acoustic Doppler current profiler to produce maps of particles such as oil that might be suspended in water. BP tentatively accepted the proposal on May 5 and then rejected it the following day, informing Camilli the company had completed developing an undersea containment structure ahead of schedule. That equipment later failed to stop the leaking oil.

Under its contract with the Coast Guard, Woods Hole said it deployed the same technology aboard a remotely operated vehicle to the well site on May 31. On June 10, the government used that data and other research to increase its estimate of the leaking oil to between 25,000 and 30,000 barrels a day. By June 15, the government increased its estimate again, this time to between 35,000 and 60,000 barrels a day.

To ensure it has technology to manage future oil disasters, the Coast Guard Research and Development Center asked on June 4 for research proposals on a range of technologies, including tracking and reporting oil that is on the surface or submerged and detecting submerged oil. The Coast Guard said it will accept proposals until June 3, 2011.
